Scientists produce an interactive programme that aids complex robots with motion planning for environments containing obstacles.

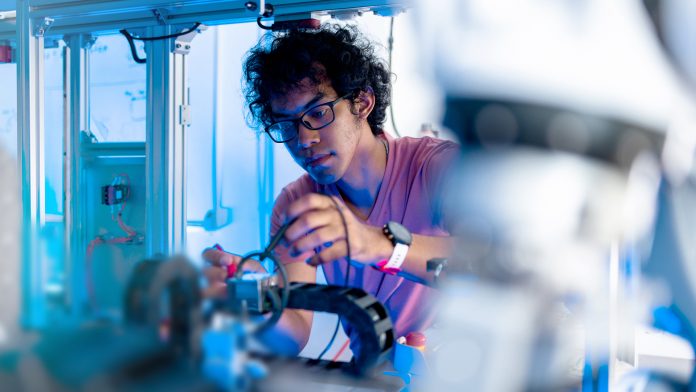

Computer scientists at Rice University have developed a method that allows humans to help complex robots ‘see’ their environments and carry out tasks more effectively by improving how they plan their movements.

The strategy, called ‘Bayesian Learning in the Dark’ (BLIND), is a novel solution to the long-standing problem of motion planning for robots that work in environments where not everything is clearly visible at all times.

Making robotic movement safer

The peer-reviewed study, led by computer scientists from Rice University’s George R. Brown School of Engineering, was presented at the Institute of Electrical and Electronics Engineers International Conference on Robotics and Automation in late May 2022.

The algorithm was developed primarily by Carlos Quintero-Peña and Constantinos Chamzas, both graduate students working with Rice computer scientist Lydia Kavraki. It is designed to keep a human in the loop to “augment robot perception and, importantly, prevent the execution of unsafe motion,” according to the study.

To do so, the researchers combined Bayesian inverse reinforcement learning (by which a system learns from experience and information that is continually updated) with established motion planning techniques to assist robots that have high degrees of freedom, which means they contain a lot of moving parts.

Testing the ‘Bayesian Learning in the Dark’ (BLIND) strategy

To test how well BLIND improved a robot’s mobility, the Rice lab directed a Fetch robot, an articulated arm with seven joints, to grab a small cylinder from one table and move it to another, moving past a barrier on the way.

“If you have more joints, instructions to the robot are complicated,” Quintero-Peña explained. “If you are directing a human, you can just say, ‘lift up your hand.’”

However, a robot’s programme must be specific about the movement of each joint at each point in its trajectory for it to complete its objective safely, especially when obstacles block the machine’s view of its target.
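To give a sense of what “specific about each joint at each point” means in practice, here is a minimal sketch in Python. It is not the planner used in the study: it simply represents a trajectory as a list of waypoints, each holding one angle per joint of a seven-joint arm, and fills in the waypoints by straight-line interpolation. The function name and the joint angles are hypothetical and chosen only for illustration.

```python
from typing import List

NUM_JOINTS = 7  # the arm described in the article has seven joints


def interpolate_trajectory(
    start: List[float], goal: List[float], steps: int
) -> List[List[float]]:
    """Return steps + 1 waypoints moving linearly from start to goal.

    Each waypoint lists one angle (in radians) for every joint, so the
    whole trajectory fixes every joint at every point along the way.
    """
    if len(start) != NUM_JOINTS or len(goal) != NUM_JOINTS:
        raise ValueError("each configuration needs one angle per joint")
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return [
        [s + (g - s) * i / steps for s, g in zip(start, goal)]
        for i in range(steps + 1)
    ]


# Hypothetical start and goal configurations (illustrative values only).
start = [0.0] * NUM_JOINTS
goal = [0.5, -0.3, 0.0, 1.2, 0.0, 0.8, 0.0]
trajectory = interpolate_trajectory(start, goal, steps=10)

print(len(trajectory), "waypoints,", len(trajectory[0]), "angles each")
```

Even this toy example needs 77 numbers to describe one short motion, and a straight line in joint space takes no account of obstacles, which is why a planner (and, in BLIND, a human) is needed to choose among candidate paths.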

Thus, rather than programming a trajectory up front, BLIND inserts a human mid-process to refine the choreographed options suggested by the robot’s algorithm. “BLIND allows us to take information in the human’s head and compute our trajectories in this high-degree-of-freedom space,” Quintero-Peña said.

“We use a specific way of feedback called critique, which is a binary form of feedback where the human is given labels on pieces of the trajectory.”

These labels appear as connected green dots that represent possible paths. As BLIND steps from dot to dot, the human approves or rejects each movement to refine the path, avoiding obstacles as efficiently as possible.

“It is an easy interface for people to use, because we can say, ‘I like this’ or ‘I do not like that,’ and the robot uses this information to plan,” Chamzas said. Once rewarded with an approved set of movements, the robot can carry out its task efficiently.
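The idea of learning from approve-or-reject feedback can be illustrated with a small Python sketch. This is a simplified stand-in, not the BLIND algorithm itself: it assumes each trajectory segment is summarised by a single made-up feature value, that the human’s preference is one unknown weight on that feature, and that the chance of approval follows a logistic curve. Every name and number below is hypothetical.

```python
import math
from typing import Dict


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def update_posterior(
    prior: Dict[float, float], feature: float, approved: bool
) -> Dict[float, float]:
    """Apply Bayes' rule once, using a single binary critique.

    prior maps each candidate preference weight to its probability.
    The assumed likelihood of approval is sigmoid(weight * feature).
    """
    unnormalised = {}
    for weight, probability in prior.items():
        p_approve = sigmoid(weight * feature)
        likelihood = p_approve if approved else 1.0 - p_approve
        unnormalised[weight] = probability * likelihood
    total = sum(unnormalised.values())
    return {w: v / total for w, v in unnormalised.items()}


# Hypothetical candidate weights: negative means the human dislikes the
# feature, zero means indifferent, positive means the human likes it.
candidates = [-2.0, 0.0, 2.0]
posterior = {w: 1.0 / len(candidates) for w in candidates}

# Hypothetical critiques: (feature value of the segment, human approved?)
critiques = [(1.0, True), (-1.0, False), (0.5, True)]
for feature, approved in critiques:
    posterior = update_posterior(posterior, feature, approved)

best_weight = max(posterior, key=posterior.get)
print("most likely preference weight:", best_weight)
```

After three critiques the belief shifts strongly towards the positive weight, and a planner could then favour segments that score well under that learned preference. The real system works over far richer trajectory descriptions, but the principle of turning simple “like” or “do not like” labels into an updated belief is the same.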

Simplifying human-robot dynamics

“One of the most important things here is that human preferences are hard to describe with a mathematical formula,” Quintero-Peña said. “Our work simplifies human-robot relationships by incorporating human preferences. That is how I think applications will get the most benefit from this work.”

“This work wonderfully exemplifies how a little, but targeted, human intervention can significantly enhance the capabilities of robots to execute complex tasks in environments where some parts are completely unknown to the robot but known to the human,” concluded Kavraki, a robotics pioneer whose resume includes advanced programming for NASA’s humanoid Robonaut aboard the International Space Station.

“It shows how methods for human-robot interaction, the topic of research of my colleague Professor Unhelkar, and automated planning pioneered for years at my laboratory can blend to deliver reliable solutions that also respect human preferences.”